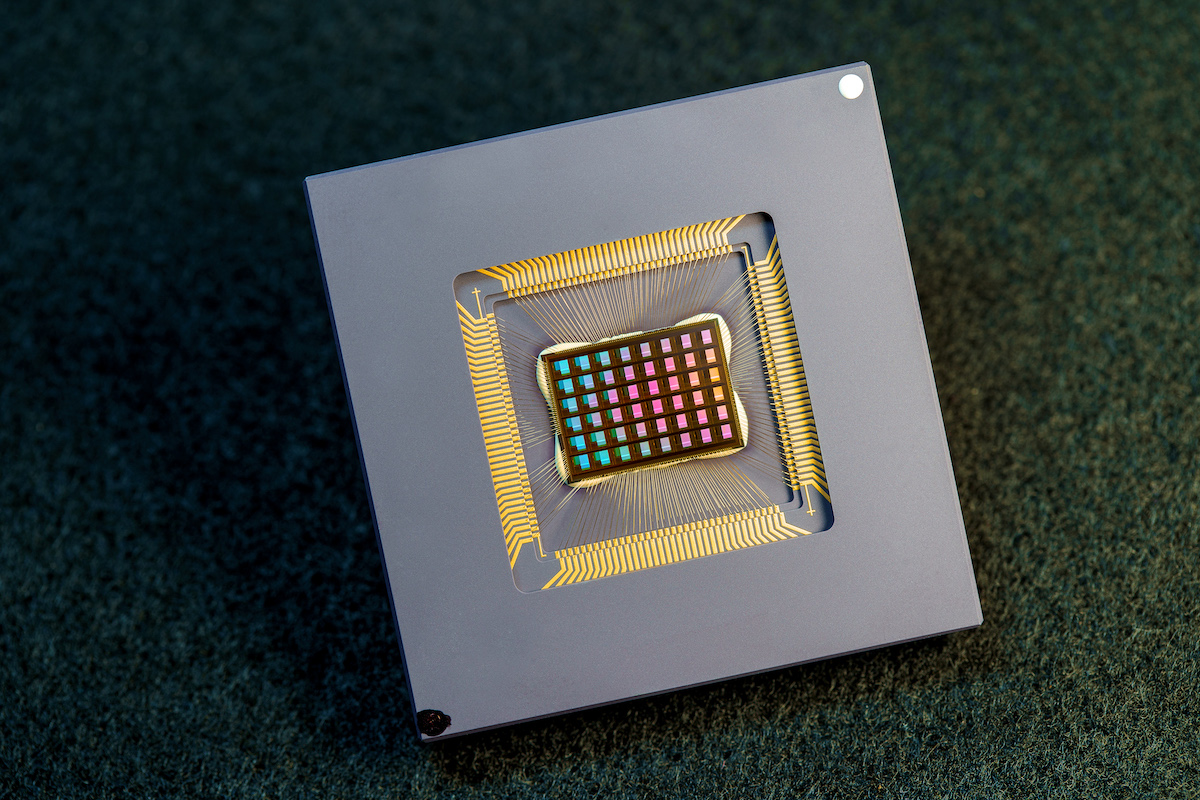

The race to merge mind and machine just hit a major milestone. Today, neurotech company NeuroLink Systems announced the release of NeuroLink Lite, which it bills as the first commercially available, non-invasive brain-computer interface (BCI) headset designed for everyday use.

Weighing just under 200 grams and styled more like a sleek pair of over-ear headphones than a medical device, NeuroLink Lite lets users control apps, type messages, and even draw images using only their thoughts.

Mind Over Machine

Unlike previous BCI efforts that required surgical implants or bulky lab equipment, NeuroLink Lite relies on ultra-sensitive dry electrodes and AI-powered signal interpretation to decode neural activity from the surface of the scalp. The device connects via Bluetooth and is compatible with phones, tablets, and desktops.

In demos shown to the press, users navigated basic UI menus, opened and closed applications, and drafted short texts, all without lifting a finger. Wearers issue commands by concentrating on the action they intend, and machine learning algorithms adapt over time to each wearer's unique brainwave patterns.

“This is the dawn of neural computing,” said CEO Thalia Nguyen at the launch event in San Francisco. “Our goal was to make the brain a controller—and to do it without surgery, wires, or complexity.”

Accessibility Meets Innovation

One of the most promising prospects for NeuroLink Lite is its potential impact on accessibility. For users with mobility impairments, the headset could offer a new level of independence by letting them control digital devices without physical input. Nguyen emphasized that accessibility features, including customizable UI overlays for different motor and cognitive needs, were central to the headset's design.

The device is also being piloted in educational settings, where early tests suggest that its neurofeedback capabilities may help students respond faster during language-learning and memory tasks.

Privacy & Ethical Questions Loom

Still, the arrival of thought-controlled consumer tech comes with serious questions. Privacy advocates warn that as devices begin interpreting brain activity, it becomes critical to regulate what data is collected, where it’s stored, and how it’s used.

NeuroLink Systems says all processing happens locally on the user's device and that no neural data is stored in the cloud by default. Users can opt in to anonymous data sharing to help train the company's AI models, but the company stresses that this sharing stays off unless users turn it on.

“Ethical design is non-negotiable,” said Nguyen. “Our mission is to empower, not exploit.”

On Sale This Fall

The headset will be available to the public in October, starting at $499, with early access going to research institutions and assistive tech partners. NeuroLink Systems is also releasing an SDK that lets developers build custom BCI-compatible apps, hinting at a potential new category of “neuro-native” digital experiences.

While NeuroLink Lite is still limited to basic commands and app interactions, its implications could be far-reaching. As BCI technology continues to evolve, we may be witnessing the first steps toward a world where keyboards, touchscreens, and even voice commands are no longer necessary.

“Typing was step one. Touch was step two. Thought is step three,” Nguyen said. “And step three starts now.”